TL;DR - Key Takeaways

- On average, enterprise companies have 709,533 publicly exposed Google Drive assets containing sensitive data - accessible to anyone with a link (DoControl, 2024)

- Google secures the infrastructure. Your team is responsible for permissions, access controls, and user behavior - this is the shared responsibility model

- The biggest risks are employee misuse, oversharing, misconfigured permissions, over-permissioned third-party apps, account takeovers, and incomplete employee offboarding

- Native Google Drive features are a solid baseline, but do not provide bulk remediation, real-time behavioral monitoring, or granular insider threat detection

- Organizations subject to HIPAA, GDPR, CCPA, SOC 2, or FINRA must implement additional controls beyond Google's defaults to remain compliant

In 2026, Google Drive is the backbone of business operations for millions of organizations worldwide. But with 2 billion monthly active users trusting it with everything from financial statements to medical records, one question keeps security and IT teams up at night: how secure is it, really?

A recent analysis by DoControl of enterprise-level Google Workspace clients uncovered alarming data. On average, companies had 709,533 publicly exposed Google Drive assets containing sensitive information - accessible to anyone with the link. The exposure from insider threats was even more troubling, with organizations averaging 120,000 sensitive assets downloaded and shared to personal email addresses. And 94,000 assets remained exposed to former employees who had already left the company.

This guide covers everything you need to know about Google Drive security in 2026: how it works, where it falls short, how to natively configure it properly in the Admin Console, and what additional controls enterprise organizations need to close the gaps Google's native tools leave open.

Is Google Drive secure?

The short answer: Google Drive is generally secure - but whether it is secure enough for your organization depends heavily on how it is configured and managed.

Google Drive is built on Google's robust cloud infrastructure and includes strong encryption, access controls, and continuous monitoring. Files are encrypted both in transit using Transport Layer Security (TLS) and at rest using AES-256, the same encryption standard used by governments and financial institutions for classified information. Google also applies zero-trust architecture principles and runs continuous security monitoring across its systems.

For most organizations, these built-in protections are a solid starting point. But security is not just about technology - it is about how people use it. Even the strongest platform cannot protect you from accidental oversharing, misconfigured permissions, or risky third-party apps. That is where Google Drive's real vulnerabilities lie.

"Even with good intentions, aligning Google Drive's sharing policies with security best practices is tough. There's too much data to check manually, and the rate of collaboration makes it hard to keep up over time."

How Google Drive security works: the shared responsibility model

Like all cloud services, Google Drive operates under a shared responsibility model. Google is responsible for the security of the infrastructure. You - your IT and security team - are responsible for everything that happens within it.

What Google protects

Google's primary responsibility is infrastructure security and data protection through encryption. All files stored in Google Drive are encrypted at rest (AES-256) and in transit (TLS). By default, files are private unless explicitly shared, providing a baseline defense against accidental exposure.

Google also proactively scans externally shared files for malware and phishing threats, blocking access when a risk is detected. Its security infrastructure uses machine learning to detect suspicious activity across systems.

However, Google Drive encryption is not zero-knowledge encryption. Google retains access to the encryption keys and could decrypt data if required by law, and the keys could be exposed if Google itself were compromised in a breach. Organizations handling highly sensitive documents should consider client-side encryption, which keeps the decryption keys out of Google's hands.

What admins are responsible for

Admins - typically IT or security teams - are responsible for enforcing security settings and policies across the organization. This includes:

- Access controls: restricting re-sharing, downloading, printing, and permission changes to prevent unauthorized data exposure

- DLP policy configuration: setting up Data Loss Prevention rules to scan for sensitive data types and trigger automated responses

- Endpoint management: enforcing device encryption, screen locks, remote signouts, and remote wipes for lost or stolen devices

- Security monitoring: using audit logs, security reports, alerts, and automated remediation workflows to detect suspicious activity and unauthorized file access, and to eliminate overexposure in real time

- Third-party app governance: regularly auditing OAuth app permissions and revoking unnecessary access

- Offboarding enforcement: revoking all Drive access for departing or former employees immediately

What end users are responsible for

Individual users play a crucial role in keeping shared files secure. Key responsibilities include maintaining strong, unique passwords and using a password manager, enabling multi-factor authentication (MFA) on their Google account, and being vigilant about sharing settings - particularly avoiding the "Anyone with the link" option for sensitive documents.

A single misconfiguration by a well-meaning employee (like setting a confidential document to publicly accessible) can expose critical data to the entire internet, with operational and regulatory consequences.

End users are often the weakest link in the security chain. 95% of security incidents stem from human error, and the introduction of non-human identities compounds the problem: in Google Drive, 40% of all events are performed by non-human AI identities. As a result, security teams often need more than Google Drive's baseline controls to keep end users from inadvertently adding risk to the environment.

The most common Google Drive security threats

While Google Drive's built-in features protect against many risks, several threat categories consistently put enterprise data at risk. Understanding these is the first step to addressing them.

1. Phishing, credential theft, and account takeover (ATO)

Phishing attacks targeting Google Drive users typically involve fraudulent communications that mimic legitimate Google notifications to steal user credentials and compromise the environment. But the more dangerous evolution of this threat is account takeover (ATO) - where an attacker gains persistent control of a Google account rather than simply harvesting credentials in a one-off attack.

With ATO, an attacker can access and exfiltrate sensitive files over an extended period, change sharing settings to expose data externally, or manipulate financial documents - all without triggering obvious alerts.

Mitigations include enforcing MFA for all accounts, monitoring for unusual login activity and unfamiliar device sign-ins, using identity threat detection & response (ITDR) tools to flag suspicious login attempts, and running regular simulated phishing exercises to build employee awareness.
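To make "unfamiliar device sign-ins" concrete, here is a minimal sketch of the idea. It assumes you can export sign-in records as (user, device ID) pairs. The function name, field layout, and sample values are hypothetical and not part of any Google API.

```python
from collections import defaultdict


def unfamiliar_signins(history, new_events):
    """Return new sign-ins that come from a device the user has never used.

    history:    iterable of (user, device_id) pairs already seen (assumed export).
    new_events: iterable of (user, device_id, timestamp) tuples to evaluate.
    """
    known = defaultdict(set)
    for user, device_id in history:
        known[user].add(device_id)

    alerts = []
    for user, device_id, timestamp in new_events:
        # A device is only trusted once it appears in the reviewed history,
        # so repeated sign-ins from an unverified device keep alerting.
        if device_id not in known[user]:
            alerts.append((user, device_id, timestamp))
    return alerts
```

In practice a check like this would feed an alert queue rather than block access outright, since new laptops and phones are also "unfamiliar" on first use.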

2. Oversharing and misconfigured access

Users frequently share files with "Anyone with the link" out of convenience, unknowingly making sensitive documents publicly accessible. Without strict, granular access controls and visibility into sharing activity, confidential information - financial documents, customer data, HR records - can be left exposed to the public internet.

As organizations grow, tracking who has access to what becomes a major operational challenge. A finance team's budget spreadsheet accidentally shared company-wide can go unnoticed for months without the right monitoring tools in place.
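One way to reason about oversharing is to reduce each file's permission list to a single exposure level. The sketch below assumes permission entries shaped like dictionaries with `type`, `emailAddress`, and `domain` keys, loosely modeled on how Drive describes permissions. The level names and the function itself are illustrative assumptions.

```python
LEVELS = ["internal", "domain", "external", "public"]


def classify_exposure(permissions, company_domain):
    """Return the widest exposure level found in a file's permission entries."""
    company_domain = company_domain.lower()
    worst = 0
    for perm in permissions:
        ptype = perm.get("type")
        if ptype == "anyone":
            level = 3  # "Anyone with the link"
        elif ptype == "domain":
            same = (perm.get("domain") or "").lower() == company_domain
            level = 1 if same else 2
        elif ptype in ("user", "group"):
            email = (perm.get("emailAddress") or "").lower()
            level = 0 if email.endswith("@" + company_domain) else 2
        else:
            level = 2  # unknown entry types are treated as external to be safe
        worst = max(worst, level)
    return LEVELS[worst]
```

Sorting a file inventory by this level gives a simple triage order: public first, then external, then domain-wide shares like the budget spreadsheet example above.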

3. Malicious and over-permissioned third-party apps

Employees regularly connect third-party applications to Google Drive to boost productivity. But these apps can request broad permissions or become compromised, acting as a conduit for data breaches. Even legitimate apps may have vulnerabilities that, if exploited, lead to unauthorized data access.

Regular audits of OAuth app permissions are essential. Admins should review which apps have access to Drive data, understand the scope of permissions each app has, and revoke access for any apps that are unused, unnecessary, or overly permissive.

4. Incomplete offboarding and residual access

Failing to revoke access for former employees or contractors is one of the most common and consequential security gaps in Google Drive environments. DoControl data found that 94,000 assets remain exposed to former employees on average across enterprise organizations. These individuals can still access, modify, or share critical company data - and in many cases, do.

A strict offboarding process should ensure all accounts, application access, and Drive permissions are fully deactivated on the employee's last day, not days or weeks later.
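As a rough illustration of what an offboarding check looks for, the following sketch cross-references file grants against a list of departed users. It assumes you already have an inventory of (file ID, email) grants and an HR-supplied list of former employees. All names and the data shapes are hypothetical.

```python
from collections import defaultdict


def residual_access(grants, departed_emails):
    """Map each departed user to the file IDs they can still reach.

    grants:          iterable of (file_id, email) pairs (assumed inventory).
    departed_emails: iterable of addresses for former employees or contractors.
    """
    departed = {email.strip().lower() for email in departed_emails}
    findings = defaultdict(list)
    for file_id, email in grants:
        key = email.strip().lower()
        if key in departed:
            findings[key].append(file_id)
    return dict(findings)
```

An empty result is the goal on an employee's last day. Anything else is a list of permissions to revoke.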

5. Ransomware and malware

Ransomware can reach Google Drive through synced endpoint devices. If a device becomes infected, the ransomware can encrypt local files that are then synced to Drive, spreading the encrypted versions across the organization's shared environment.

Google Drive's file versioning feature provides a partial mitigation - if a file is encrypted by ransomware, users can restore a previous version from before the infection. However, version history is not a full backup solution, and proactive measures like endpoint protection, behavioral monitoring, and regular security audits remain essential. No cloud service is completely free from ransomware risk.

6. Lack of visibility across the Drive environment

One of the most underappreciated Google Drive security challenges is not a specific threat - it is an absence of information. Security teams often struggle to answer basic questions: What sensitive data exists in Drive? Who owns it, and who has access - internally and externally? How do we monitor access patterns over time?

Without continuous visibility into sharing configurations and user behavior, exposure accumulates silently. By the time a data leak is discovered, the damage may already have been done.

How to secure Google Drive: the complete checklist

Protecting Google Drive requires a layered approach combining access controls, user education, and proactive monitoring. Use the following checklist to systematically reduce your organization's exposure.

- Configure Information Rights Management (IRM): set Google Workspace policies to restrict re-sharing, downloading, printing, copying, and permission changes to reduce accidental or intentional data exposure

- Enforce Multi-Factor Authentication (MFA): require MFA for all user accounts - even if credentials are compromised, attackers still need a second verification step to access the account

- Apply the principle of least privilege: give each user only the minimum Drive permissions they need. Grant Viewer or Commenter roles by default rather than Editor or Owner. Regularly review and revoke access that is no longer needed

- Educate end users on secure file sharing: train users on proper sharing settings, the risks of public links, and how to recognize phishing attempts targeting Google credentials

- Enable security alerts for suspicious activity: configure audit log filters and email alerts for anomalous behavior - mass downloads, unusual external shares, permission changes by non-admins

- Implement endpoint management: enforce device encryption, screen locks, password requirements, and enable remote signout and remote wipe capabilities for lost or stolen devices

- Review and remove over-permissioned third-party apps: conduct regular audits of OAuth apps connected to Drive, revoking any that are unused, unnecessary, or requesting excessive permissions

- Deploy a SaaS Security Posture Management (SSPM) tool or a SaaS DLP: either tool enhances Google Drive security by detecting data leaks, preventing unauthorized sharing, monitoring user behavior, and providing visibility into third-party app risk - adding a layer of control and remediation to the Google Workspace environment

Step-by-step: how to audit and remediate Google Drive security

Use this process for your initial security audit, then run it on a quarterly basis to keep exposure under control.

- Step 1: Export your Drive sharing report from Admin Console > Reporting > Audit > Drive. Filter for external shares and public links.

- Step 2: Identify all files and folders shared with "Anyone with the link" or shared externally outside your domain.

- Step 3: Flag files containing sensitive data - SSNs, financial records, health information, legal documents - using DLP scanning or manual review.

- Step 4: Revoke public links and remove unnecessary external shares. For large environments, use a third-party tool to do this at scale.

- Step 5: Review all OAuth apps with access to Drive data in Admin Console > Security > API controls. Revoke high-risk or unused app permissions.

- Step 6: Audit former employee accounts. Identify any accounts that retain Drive access post-departure and revoke immediately.

- Step 7: Set up ongoing automated alerts for new risky sharing events so future exposure is caught in real time rather than retrospectively.
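Steps 1 through 4 above can be partly scripted once the report is exported. The sketch below assumes a CSV export with `doc_id`, `title`, and `visibility` columns and visibility values such as `public_on_the_web`. Your export's actual column names and values may differ, so treat them as placeholders.

```python
import csv
import io

# Visibility values treated as risky in this sketch (assumed naming).
RISKY_VISIBILITY = {"public_on_the_web", "people_with_link", "shared_externally"}


def risky_rows(csv_text):
    """Return one entry per document whose visibility is public or external."""
    reader = csv.DictReader(io.StringIO(csv_text))
    seen = set()
    results = []
    for row in reader:
        doc_id = (row.get("doc_id") or "").strip()
        visibility = (row.get("visibility") or "").strip().lower()
        if not doc_id or doc_id in seen:
            continue  # skip blank rows and repeat events for the same file
        if visibility in RISKY_VISIBILITY:
            seen.add(doc_id)
            results.append(
                {
                    "doc_id": doc_id,
                    "title": (row.get("title") or "").strip(),
                    "visibility": visibility,
                }
            )
    return results
```

The resulting list is a starting point for Step 3 (flagging sensitive content) and Step 4 (revoking links), not a replacement for either.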

Google Drive security settings: Admin Console walkthrough

Google Workspace admins have significant control over Drive security through the Admin Console. The following are the most critical settings to configure, with the exact navigation paths.

Sharing settings

Navigate to: Admin Console > Apps > Google Workspace > Drive and Docs > Sharing settings

- Set the default sharing setting to Restricted (only people explicitly added can access)

- Control external sharing by domain allowlist - specify which external domains users are permitted to share with

- Disable "Anyone with the link" as an organization-wide default

- In Drive's advanced sharing settings, uncheck the option that allows editors to add new users or change permissions

Data Loss Prevention (DLP) rules

Navigate to: Admin Console > Security > Data Loss Prevention

- Define content detectors for your organization's sensitive data types: Social Security numbers, credit card numbers, bank account numbers, PHI, PII

- Set rule actions: block sharing, display a warning to the user, or trigger an admin alert depending on sensitivity level

- Schedule regular DLP policy reviews - data types and business needs evolve, and policies should keep pace

- Note: Google's native DLP has limitations - it does not cover all file types, does not apply retroactively to historical files, and lacks granular behavioral context
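To show what a content detector does under the hood, here is a simplified sketch that finds US Social Security numbers and payment card numbers in plain text. It is not how Google's DLP engine is implemented. The patterns are deliberately basic, and the Luhn checksum is a standard technique for weeding out random digit runs that only look like card numbers.

```python
import re

# SSN in the common NNN-NN-NNNN form, excluding ranges that are never issued.
SSN_RE = re.compile(r"\b(?!000|666|9\d\d)\d{3}-(?!00)\d{2}-(?!0000)\d{4}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(candidate):
    """Return True if the digits in candidate pass the Luhn checksum."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    if len(digits) < 13:
        return False
    total = 0
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def scan_text(text):
    """Return (detector_name, matched_text) pairs found in text."""
    findings = [("ssn", match.group()) for match in SSN_RE.finditer(text)]
    for match in CARD_RE.finditer(text):
        if luhn_valid(match.group()):
            findings.append(("credit_card", match.group()))
    return findings
```

A production rule set would add context keywords and confidence thresholds to reduce false positives. This sketch only illustrates the pattern-plus-validation approach.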

Drive audit log

Navigate to: Admin Console > Reporting > Audit > Drive

- Enable full audit logging for all Drive activity - file creation, edits, shares, downloads, permission changes

- Set up filters for high-risk events: downloads of large numbers of files, external shares of flagged file types, permission changes made by non-admin users

- Configure email-based alerts to notify the security team of anomalous activity in real time

- Review logs regularly - without active monitoring, audit logs are data you have but never act on
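The "downloads of large numbers of files" filter can be thought of as a sliding-window count per user. The sketch below assumes audit events have been exported as (timestamp, user, event name) tuples with `download` as the event name. The threshold and window are arbitrary illustrative defaults, not recommended values.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def mass_download_users(events, threshold=50, window=timedelta(minutes=10)):
    """Return users with at least `threshold` downloads inside any `window`.

    events: iterable of (timestamp, user, event_name) tuples (assumed export).
    """
    recent = defaultdict(deque)  # user -> download timestamps still in window
    flagged = set()
    for timestamp, user, event_name in sorted(events, key=lambda e: e[0]):
        if event_name != "download":
            continue
        timestamps = recent[user]
        timestamps.append(timestamp)
        # Drop downloads that have aged out of the window.
        while timestamp - timestamps[0] > window:
            timestamps.popleft()
        if len(timestamps) >= threshold:
            flagged.add(user)
    return flagged
```

Tuning the threshold per role matters: a data engineer and a sales rep have very different normal download volumes.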

Third-party app controls

Navigate to: Admin Console > Security > API controls

- Review all OAuth apps that have been granted access to Google Drive data

- Restrict high-risk OAuth scopes - apps requesting full Drive read/write access warrant extra scrutiny

- Consider enabling admin approval for new third-party app installs to prevent shadow IT accumulation

- Remove access for apps that are no longer actively used
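A review of connected apps usually comes down to two questions: how broad are the scopes, and is the app still used? The following sketch assumes an app inventory with `name`, `scopes`, and `last_used` fields, which is a hypothetical shape. The two scope URLs are Google's published Drive scopes for full access and read-only access to all files.

```python
from datetime import date

FULL_DRIVE = "https://www.googleapis.com/auth/drive"
READ_ALL_DRIVE = "https://www.googleapis.com/auth/drive.readonly"


def review_queue(apps, today, stale_after_days=90):
    """Map app name -> list of reasons the app deserves a manual review."""
    findings = {}
    for app in apps:
        reasons = []
        scopes = set(app["scopes"])
        if FULL_DRIVE in scopes:
            reasons.append("full Drive read/write scope")
        elif READ_ALL_DRIVE in scopes:
            reasons.append("read access to all Drive files")
        idle_days = (today - app["last_used"]).days
        if idle_days > stale_after_days:
            reasons.append(f"unused for {idle_days} days")
        if reasons:
            findings[app["name"]] = reasons
    return findings
```

The 90-day staleness cutoff is an assumption for illustration. Pick a value that matches how often your teams actually use seasonal or project-based tools.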

Endpoint verification

Navigate to: Admin Console > Devices > Endpoints

- Enforce device encryption for all endpoints accessing Google Drive

- Require screen lock and password enforcement on mobile devices

- Enable remote signout and remote wipe for lost or stolen devices

- Consider Context-Aware Access policies to restrict Drive access from unmanaged or non-compliant devices

Advanced Google Drive security features worth knowing

Google Vault: retention, eDiscovery, and legal holds

Google Vault is Google Workspace's information governance tool, available on select Workspace editions. It allows organizations to retain, search, and export data from Drive for legal, compliance, and audit purposes.

Key capabilities include setting retention policies (automatically retain Drive data for a specified period or delete it after a set time), placing legal holds on individual users' Drive data to prevent deletion during litigation, running eDiscovery searches across Drive content, and exporting data in formats suitable for legal review.

For organizations in regulated industries or with legal discovery obligations, Vault is an essential layer of governance that goes beyond what the Drive audit log provides.

Context-Aware Access and zero-trust policies

Context-Aware Access is Google's implementation of zero-trust principles for Workspace, powered by Google's BeyondCorp framework. Rather than granting access based purely on identity, it evaluates context - the user's device, location, and security posture - before allowing access to Drive.

Admins can create access levels that define conditions (e.g., only managed devices running a current OS version, only access from within corporate IP ranges) and apply these to Drive access policies. This significantly reduces the risk of account takeover, since an attacker with valid credentials but an unrecognized device or location is blocked from accessing data.
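Conceptually, an access level is a set of conditions that a request's context must satisfy. The sketch below models that idea in plain Python. It is not Google's policy syntax or evaluation engine, the class and field names are invented for illustration, and it requires every condition to hold at once. The IP ranges are reserved documentation addresses.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    device_managed: bool
    os_version: tuple  # e.g. (14, 2)
    source_ip: str


@dataclass(frozen=True)
class AccessLevel:
    require_managed: bool
    min_os_version: tuple
    allowed_networks: tuple  # CIDR strings, e.g. ("203.0.113.0/24",)


def is_allowed(ctx, level):
    """Return True only if the request context meets every condition."""
    if level.require_managed and not ctx.device_managed:
        return False
    if ctx.os_version < level.min_os_version:
        return False
    address = ipaddress.ip_address(ctx.source_ip)
    return any(
        address in ipaddress.ip_network(cidr) for cidr in level.allowed_networks
    )
```

This shows why valid credentials alone are not enough under such a policy: a stolen password used from an unmanaged device or an outside network fails the check before any file is served.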

Drive labels and data classification

Google Drive labels allow organizations to apply sensitivity tags to files - Confidential, Internal Only, PII, Public - either manually or via Google's AI-powered automatic classification. Labels integrate directly with DLP rules, allowing admins to apply stricter sharing controls to files tagged as sensitive automatically.

A well-implemented labeling strategy is foundational to a data classification program and helps ensure that compliance requirements (like not sharing PHI or PII externally) are enforced at the file level, not just at the policy level.
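The link between labels and sharing rules can be pictured as a lookup from label to the widest audience allowed. This sketch uses the four example labels above with a made-up policy mapping. The mapping, the audience names, and the choice to treat unlabeled files as internal-only are all assumptions for illustration.

```python
# Audiences ordered from narrowest to widest.
AUDIENCE_RANK = {"internal": 0, "external": 1, "public_link": 2}

# Hypothetical policy: the widest audience each label permits.
MAX_AUDIENCE = {
    "Public": "public_link",
    "Internal Only": "internal",
    "Confidential": "internal",
    "PII": "internal",
}
DEFAULT_AUDIENCE = "internal"  # unlabeled or unknown labels stay internal


def share_allowed(labels, target_audience):
    """Return True if every label on the file permits the target audience."""
    limits = [MAX_AUDIENCE.get(label, DEFAULT_AUDIENCE) for label in labels]
    if not limits:
        limits = [DEFAULT_AUDIENCE]
    # The most restrictive label wins.
    strictest = min(limits, key=AUDIENCE_RANK.__getitem__)
    return AUDIENCE_RANK[target_audience] <= AUDIENCE_RANK[strictest]
```

Letting the strictest label win means a file tagged both Public and PII is still blocked from external sharing, which is usually the safer default.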

Is Google Drive compliant with GDPR, CCPA, HIPAA, SOC 2, and FINRA?

Google's privacy framework aligns with major global data protection regulations. Google Workspace provides some built-in compliance tools including AI-powered data classification, audit logging, and DLP. However, compliance is never automatic - it requires deliberate configuration, access control policies, and data protection practices at the organizational level.

GDPR

Google Drive supports GDPR compliance through encryption, access controls, and audit logs that help meet data protection and security standards. Organizations must configure these tools appropriately and ensure their own data handling practices - access permissions, retention policies, data subject request workflows - align with GDPR obligations.

CCPA

For organizations subject to the California Consumer Privacy Act, Google Workspace provides the tools to help manage consumer data access, deletion requests, and opt-out rights. Again, the organization is responsible for configuring these tools and maintaining appropriate data inventories.

SOC 2

Google Workspace undergoes regular third-party SOC 2 Type II audits, which attest that its systems meet the Trust Services Criteria for security, availability, and confidentiality. Organizations pursuing their own SOC 2 report must ensure their internal use of Drive - access controls, monitoring, incident response - also meets these criteria.

HIPAA

Healthcare organizations and their business associates can use Google Drive for data that falls under HIPAA, provided they sign a Business Associate Agreement (BAA) with Google. The BAA is available to Google Workspace for Healthcare customers and establishes Google's responsibilities for protecting Protected Health Information (PHI).

However, a BAA alone does not make Google Drive HIPAA compliant - the covered entity is responsible for configuring appropriate access controls, audit logging, and encryption to meet the Security Rule's requirements. Organizations handling PHI, PII (Personally Identifiable Information), PCI data (payment card information), or other regulated data types should conduct a formal risk assessment and implement additional controls beyond Google's defaults.

FINRA

Financial services organizations subject to FINRA regulations face specific data retention and supervision requirements. Google Vault can help meet retention obligations for Drive data. However, firms should evaluate whether Google's native capabilities meet their specific supervisory and archiving requirements, or whether additional third-party solutions are needed.

Why employee behavior remains the biggest Google Drive security risk

Technology can only go so far. Across all the threat categories above, the most common root cause is not a sophisticated external attack - it is something an employee does. It could be a well-intentioned but risky action, like accidentally setting a file to public.

Or it could be malicious, like sharing sensitive files to a personal email address before leaving the company for a competitor. The number of insider threat incidents within Google Workspace increases every year. In fact, 76% of organizations have detected increased insider threat activity over the past five years, but fewer than 30% believe they are equipped with the right tools to handle it.

Additionally, employees regularly collaborate with contractors, vendors, and third-party agencies through Google Drive. When projects end, access to Docs or Sheets containing sensitive information often is not revoked. This leaves sensitive company data exposed to external parties indefinitely - and typically without the organization's awareness.

The remediation challenge compounds this problem. Google's native capabilities do not allow for bulk unsharing of historical files or bulk revocation of over-permissioned third-party apps at scale. Without regular audits and automated remediation tools, data exposure accumulates over time and becomes increasingly difficult to address.

Once exposure has accumulated, Google's native security features alone offer no practical way to remediate it at scale. However, this is not Google's fault. Google is not a security company, and it should not be expected to offer every security solution natively.

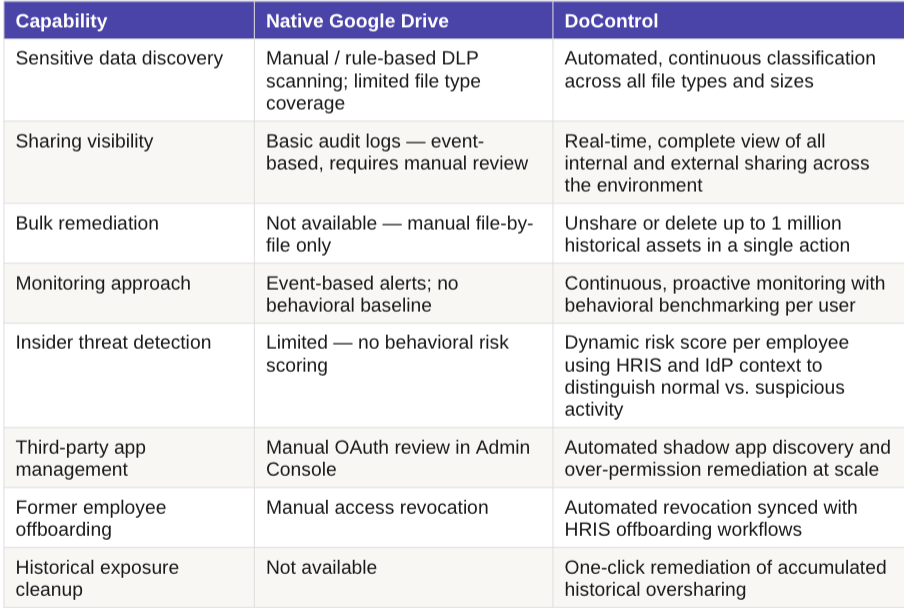

Native Google Drive security vs. DoControl

Google's native security tools provide a solid foundation. But enterprise organizations typically need additional capabilities to address behavioral risk, achieve real-time visibility, and remediate exposure at scale. The table below summarizes where native Google Drive capabilities end and where additional controls add value.

How DoControl mitigates Google Drive security risks

DoControl provides visibility, automation, and enforcement to ensure employee behavior does not become a security liability. Working continuously in the background, DoControl addresses the gaps that Google's native tools leave open.

- Complete behavioral visibility: DoControl offers complete data access governance, so you can see who is sharing what, when, and with whom - in real time across your entire Google Drive environment

- HRIS and IdP integration: sync Google Workspace with your HRIS and identity systems to assess whether file-sharing actions are routine or anomalous based on role, department, employment status, and other factors

- Dynamic risk scoring: each employee receives a real-time risk score based on their file-sharing behavior and contextual data from connected systems

- Bulk historical remediation: unshare or delete up to one million historical assets in a single click, eliminating the long tail of accumulated exposure

- Automated continuous remediation workflows: custom security workflows detect suspicious activity, trigger on specific events or scenarios, and either remediate automatically or escalate to IT with actionable alerts - no manual triage required

DoControl secures every layer of the Google Drive attack surface - data, identities, configurations, and connected applications - through four core capabilities:

- Data Access Governance + DLP: continuous data classification, automated threat detection, and real-time prevention of unauthorized access and data leaks

- Identity Threat Detection & Response (ITDR) + Insider Risk Management: behavioral benchmarking and cross-system context to differentiate normal activity from genuine insider threats

- Shadow App Discovery & Remediation: automated identification and removal of risky OAuth applications and over-permissioned third-party integrations

- SaaS Misconfiguration Management: continuous audits of Google Workspace admin configurations against CIS benchmarks, with automated remediation recommendations

Make Google Drive security a priority

Google Drive holds the highest market share of any cloud file-sharing platform globally. Organizations rely on it daily - and when secured properly, it delivers exceptional collaboration with strong baseline protections. But as this guide demonstrates, the baseline is not enough on its own.

Securing Google Drive is an ongoing process, not a one-time configuration. It requires continuous visibility into sharing behavior, regular audits of permissions and third-party apps, a clear compliance posture, and the right tools to remediate exposure before it becomes a breach.

By leveraging Google's built-in protections, filling the gaps with solutions like DoControl, and building a culture of security-aware sharing, organizations can give their teams the collaboration tools they need without the security and compliance risks that come with unmanaged exposure.

Frequently asked questions

Is Google Drive safe for businesses?

Google Drive offers strong baseline security features - encryption, access controls, and DLP tools - but those alone are not sufficient for most enterprise needs. The biggest challenge is not the platform itself; it is how users and employees interact with it. Without visibility into file sharing, external access, and third-party integrations, sensitive data can slip through the cracks. With the right security controls and monitoring in place, Google Drive can absolutely support secure, compliant business operations.

What are the biggest Google Drive security risks?

The most common risks are overshared files, unmanaged external collaborators, account takeovers, and excessive third-party app access. Users can easily share sensitive data externally - intentionally or not - through public links or personal accounts. Without automated monitoring and remediation, these risks accumulate over time, leaving organizations progressively more exposed.

Who is responsible for Google Drive security?

Both Google and your organization share responsibility under the shared responsibility model. Google protects the underlying infrastructure, handles encryption, and maintains its servers. Your organization is responsible for managing user access, setting sharing permissions, training employees, and deploying security tools to protect your data. How data is used, shared, and governed within Drive is entirely your team's responsibility.

How do I prevent data leaks in Google Drive?

Admins can set up strong sharing policies, configure Data Loss Prevention (DLP) rules, and regularly audit shared files and permissions. Enforcing MFA, training users on secure sharing practices, and enabling alerts for risky sharing events all reduce the risk of accidental leaks. For enterprise environments, a third-party security solution that provides bulk remediation and real-time behavioral monitoring is strongly recommended.

Does Google Drive protect against ransomware?

Google Drive provides partial protection through its file versioning feature - if a file is encrypted by ransomware, users can restore a previous version from before the infection occurred. However, this is not a full backup solution. If ransomware spreads across synced files before it is detected, version history may be insufficient. Proactive endpoint protection, behavioral monitoring, and regular security audits remain essential defenses.

Is Google Drive HIPAA compliant?

Google Drive can be used for HIPAA-covered data if your organization has signed a Business Associate Agreement (BAA) with Google, which is available to Google Workspace for Healthcare customers. However, the BAA alone does not make your organization HIPAA compliant - you must also configure appropriate access controls, enable audit logging, and implement additional safeguards to meet the Security Rule's requirements for protecting PHI.

Can everyone see my Google Drive files?

No. By default, Google Drive files are private and only visible to you. Others can only access your files if you explicitly share them - either by adding specific people, sharing via a restricted link, or (most riskily) setting sharing to "Anyone with the link." The danger comes when users change these defaults without fully understanding the implications.

What are the disadvantages of Google Drive for security?

Google Drive's primary security disadvantages are its default sharing settings, the ease with which users can overshare, the lack of bulk remediation for historical exposure, limited behavioral monitoring, and incomplete DLP coverage (not all file types, no retroactive scanning). These gaps are manageable with the right configuration and supplementary tools - but they require deliberate effort and cannot be addressed through Google's native capabilities alone.
