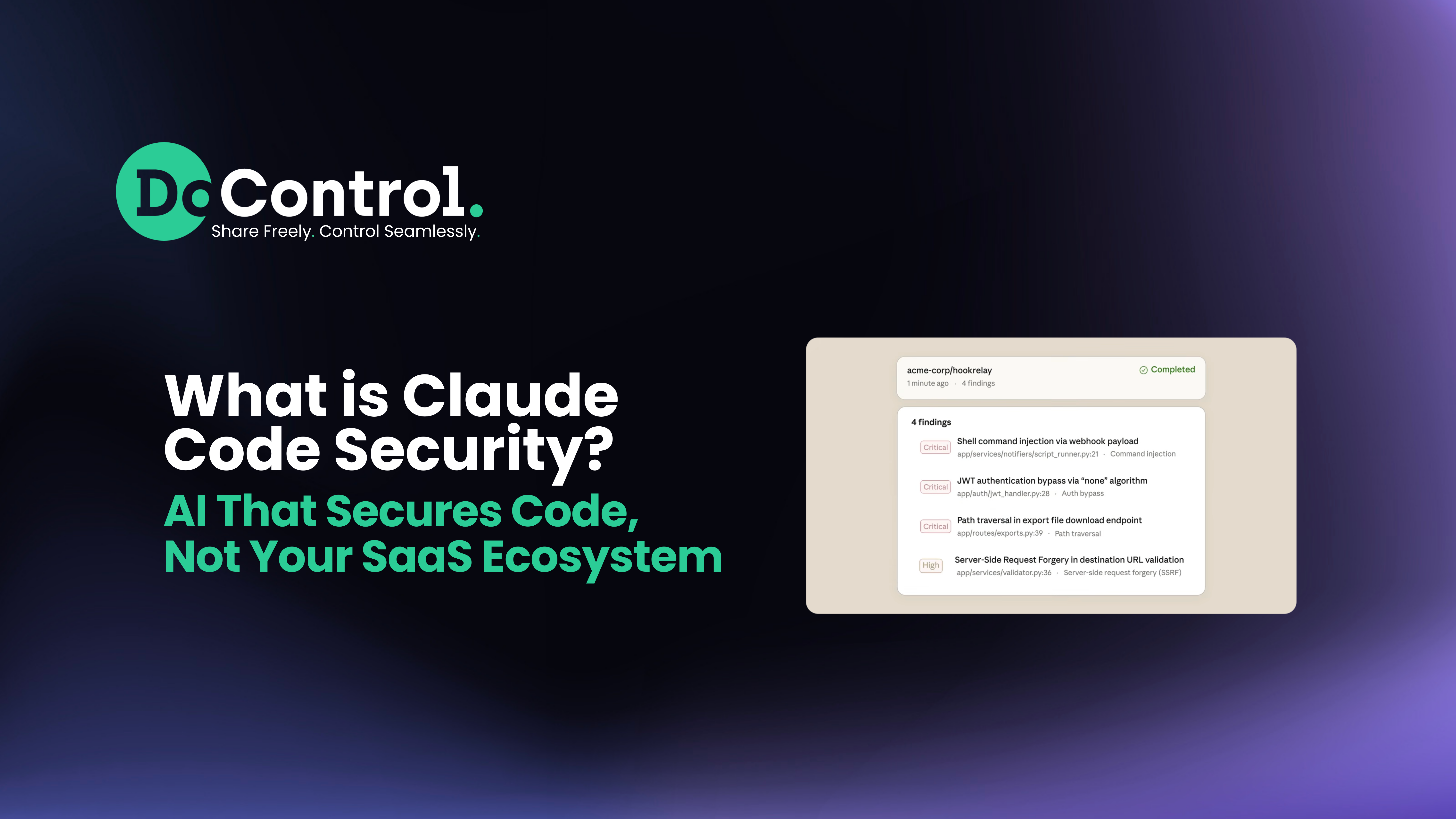

Late last week, Anthropic announced a limited research preview of Claude Code Security, a new capability built into Claude Code that scans codebases for complex vulnerabilities and suggests targeted patches for human review.

It marks a significant milestone, and it is taking the security world by storm. The market reacted quickly: CrowdStrike (CRWD) closed Friday’s trading session down more than 9%. Other cybersecurity stocks fell as well. SailPoint Technologies (SAIL) dropped more than 9% by the close of Friday’s session, and Cloudflare (NET) declined just over 7%.

Why is this? Much of the reaction appears to be driven by fear. Many investors may assume that cybersecurity products are no longer needed now that Anthropic is launching these capabilities. That is why it's important to explain clearly what this innovation actually means, what it entails, and who it affects.

So, what is Claude Code Security?

Claude Code Security moves beyond the traditional rule-based static analysis of code. Instead of simply matching known vulnerability patterns, it reasons about how components interact, how data flows, and where subtle business logic flaws may exist.

Anthropic reported that its latest model found more than 500 previously undetected vulnerabilities in production open-source code, some of which had gone unnoticed for decades.

This is real progress.

But it’s also important to understand what this announcement is, and what it isn’t. There is still a tremendous need for cybersecurity. In fact, that need has never been greater.

AI Is Transforming Code Security, Not Replacing Cybersecurity

There’s a growing narrative in the industry that AI is “taking over” security: that capabilities like this mean cybersecurity teams will shrink, security vendors will disappear, and machines will replace practitioners.

That’s not what this announcement implies at all.

What it does say is this: AI is accelerating vulnerability discovery. It’s compressing timelines. It’s increasing the velocity at which both defenders and attackers can identify weaknesses in code.

That doesn’t reduce the need for security.

It increases it.

AI is transforming code security, but it will not replace cybersecurity systems and methods. Instead, it is expanding the surface area those systems must protect.

Securing Code Before It Ships vs. Securing What Happens After

Claude secures the code itself, and that is critical. Vulnerabilities caught before deployment reduce risk, and AI-assisted static analysis will likely become standard across development pipelines.

But once that code ships, an entirely different layer of risk begins.

Applications don’t run in isolation…

- They connect to SaaS platforms.

- They integrate with third-party tools.

- They inherit human permissions.

- They access sensitive data.

- They are powered or augmented by AI agents that operate with broad authority and permissions.

THIS is where the conversation must evolve:

Claude secures code BEFORE it ships. SaaS security platforms secure the environment AFTER it runs, continuously and contextually.

Once deployed, risk is no longer about a line of code. It’s about:

- Who has access.

- Which AI apps are connected.

- What permissions they inherit.

- How data moves between systems.

- Whether insiders are misusing access.

- Whether AI agents are over-permissioned.

- Whether new paths to data exfiltration have been introduced.

That ecosystem-level risk does not disappear because vulnerabilities were patched at build time. Managing that risk, and remediating the exposure it creates, is not something Anthropic’s code scanning can replace.

AI Changes How Systems Access Data

The deeper shift happening in security is not just AI scanning code. It’s AI interacting with enterprise environments.

AI agents introduce new attack vectors. Nonhuman identities create entirely new risk profiles. Third-party AI apps may also access sensitive company data. AI applications often operate with human-level permissions, while AI-powered, “vibecoded” workflows can automate privileged actions at scale.

This is why framing AI as a replacement for cybersecurity misses the point.

AI doesn’t eliminate risk. It reshapes what risk looks like and demands more from security teams and security vendors.

From Fear to Architecture

There’s a noticeable fear-driven mindset emerging in the industry: that AI is here to take over everything, that practitioners will be replaced, that security vendors will be displaced.

But what Anthropic has released is not an autonomous replacement for security. It’s a powerful tool whose findings still depend on human validation, confidence ratings, and approval workflows.

There still needs to be a human in the loop, and Anthropic says so clearly: “It [Claude Code Security] scans codebases for security vulnerabilities and suggests targeted software patches for human review.”

Nor will the vendor side of the house be displaced. Some AppSec vendors that secure code or deliver similar value-add services may be affected, depending on how differentiated and valuable their products truly are. But the companies currently being questioned, whose stocks are declining, typically operate further downstream in the process.

Once code is secured, it is deployed into the environment itself. At that stage, third-party vendors and tools are still essential. They secure identities, monitor activity, detect suspicious logins, launch DLP workflows, enforce least-privilege controls, and remediate exposure, and the list goes on.

The misconception around this launch from Anthropic is that it (and other AI models) will replace cybersecurity as a whole. In reality, it addresses only one piece of a much larger puzzle.

The Broader Transformation

At DoControl, we’ve been studying the broader implications of AI in security for years. This is an important milestone, but it’s one piece of a larger transformation.

AI-assisted code scanning strengthens one layer of the stack. But the broader SaaS ecosystem - where data lives, where permissions sprawl, where insiders operate, and where AI apps integrate - still requires continuous oversight and remediation.

The innovations coming from Anthropic and others do not signal that we need less security software. On the contrary, we need more:

- More visibility into SaaS environments.

- More control over AI application permissions.

- More monitoring of insider activity.

- More governance over how AI systems access enterprise data.

As AI accelerates software creation and vulnerability discovery, it simultaneously expands the number of systems interacting with sensitive information.

Securing code is foundational. Securing the ecosystem around that code is ongoing.

Claude is helping secure what gets built.

The next challenge, and the larger one that will persist long after this launch, is securing everything that happens once that code runs.